This post explores research originally presented at the CVPR 2025 Workshop on Synthetic Data for Computer Vision (SynData4CV).

TL;DR

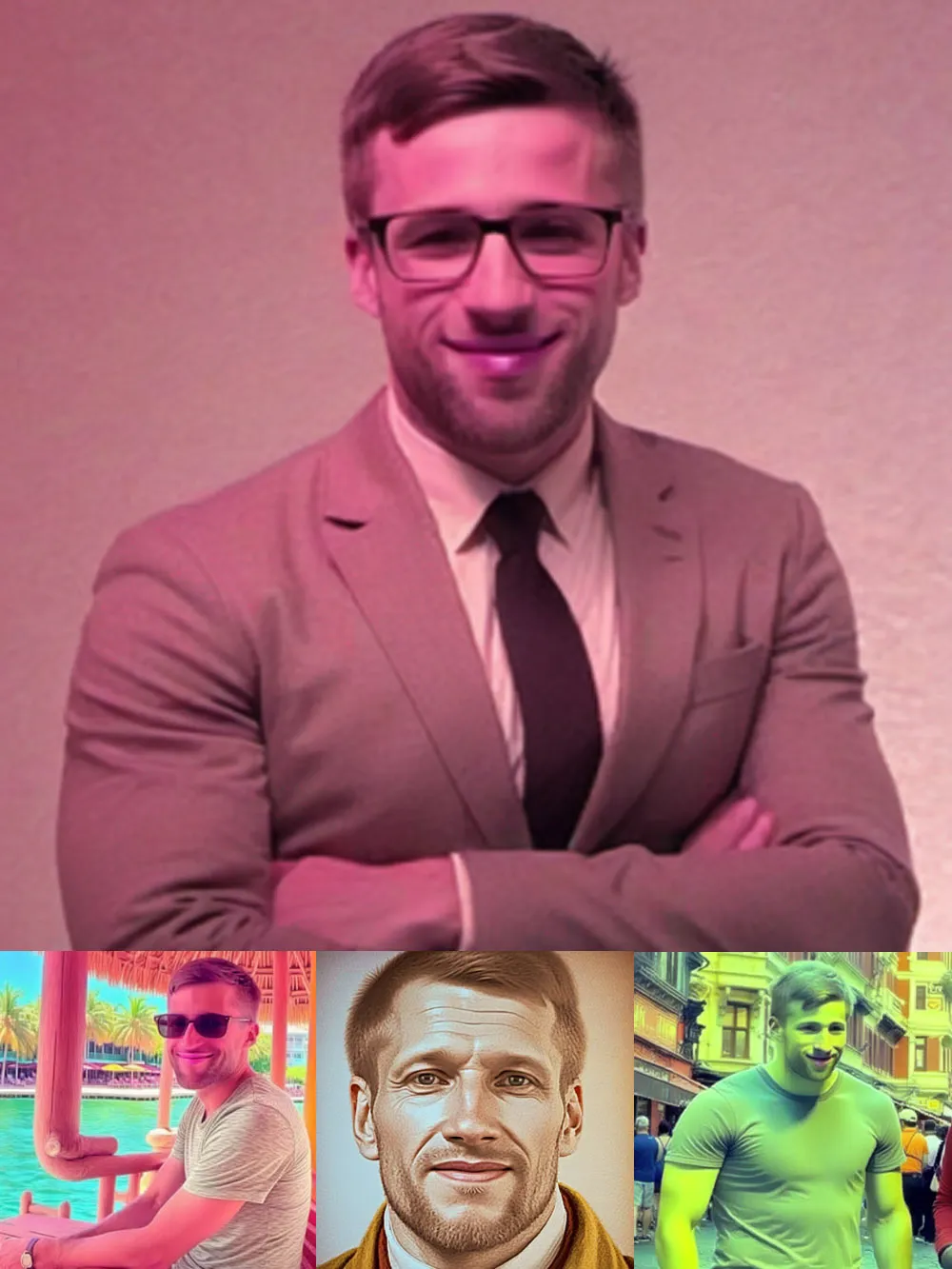

“Classical” image tweaks like flipping or rotating can distort facial identity. This research shows that using InstantID to generate high-quality synthetic portraits as training data instead significantly improves a DreamBooth model’s ability to produce realistic, professional-grade headshots.

The Problem with Few-Shot AI Portraits

You have five casual phone photos and want a polished LinkedIn headshot. Sounds like a job for a text-to-image model, right? In theory, yes. In practice, personalized diffusion models like DreamBooth hit an identity-retention bottleneck: they need to learn who you are from a tiny handful of images, then generalize that identity to entirely new scenes and styles.

This is the few-shot personalization problem. It sits at the tension between two competing goals: identity retention (the output should actually look like you) and recontextualization (you should be placeable in any scene the user prompts). Most standard training pipelines lean hard in one direction or the other. My research, published as “Generating Synthetic Data via Augmentations for Improved Facial Resemblance in DreamBooth and InstantID”, investigates a third path: using one generative model to improve the training of another.

Why Classical Augmentations Backfire

When deep learning practitioners want more training data, the first instinct is to reach for classical augmentations: random flips, crops, rotations, colour jitter. These are reliable staples for large-scale classification tasks. For few-shot face personalization, they are a trap.

Geometric traps

Random Horizontal Flip seems harmless, but faces are subtly asymmetric. A mole on the left cheek, a slightly crooked smile, the direction of a part in the hair: flipping these teaches the model a second identity that contradicts the first. Rather than generalizing, the model averages them into an uncanny composite.

Random Rotation introduces black padding bars around the frame, which the model dutifully learns as part of the subject’s visual signature. It also misaligns facial landmarks, undermining the spatial consistency that makes face generation coherent.

Colour confusion

Colour Jittering (tweaking brightness, contrast, saturation, and hue) causes the model to incorrectly associate those shifts with the rare token representing your subject. The result is erratic generations where the subject might appear with an alien skin tone or under lighting that was never in any real photograph.
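To get a feel for how far a modest hue shift moves a skin tone, here is a minimal sketch using only Python’s standard library. The sample colour and the 0.1 shift are illustrative assumptions, not values from the paper; hue is expressed as a fraction of the colour wheel, which is how hue jitter is commonly parameterized.

```python
import colorsys

def shift_hue(rgb, delta):
    """Shift the hue of an 8-bit RGB colour by `delta` turns of the colour wheel."""
    r, g, b = (c / 255.0 for c in rgb)
    h, s, v = colorsys.rgb_to_hsv(r, g, b)
    h = (h + delta) % 1.0  # hue is cyclic, so wrap around
    return tuple(round(c * 255) for c in colorsys.hsv_to_rgb(h, s, v))

# Hypothetical sample colour, chosen for illustration only.
SKIN_TONE = (224, 172, 105)

shifted = shift_hue(SKIN_TONE, 0.1)
print("original:", SKIN_TONE, "shifted:", shifted)
```

In the original colour the red channel dominates; after the shift the green channel does. A model that sees both versions attached to the same rare token has no way to know which one is the real subject.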

Segmentation imperfections

Replacing backgrounds using a segmentation model like -Net sounds like a clean solution to background leakage. In practice, the segmentation boundary around fine hair creates a blended halo artifact. The model then learns that wispy, semi-transparent fringe is part of the subject’s identity, making clean background swaps nearly impossible downstream.

The pattern is the same across all of these: classical augmentations introduce distributional artifacts, and the model, with no other signal to reject them, faithfully memorizes those artifacts as identity-defining features.

A New Approach: GenAI Improving GenAI

Instead of perturbing real images in ways that corrupt facial structure, the approach explored in this paper asks a different question: what if the augmented images were themselves high-quality generations of the person, produced by a model that already understands faces?

The answer is generative augmentation via InstantID. By conditioning InstantID on a subject’s facial landmarks and a set of reference images, we can synthesize diverse, photo-realistic portraits of that person across varied poses, lighting conditions, and contexts, all while preserving the structural integrity of their face. These synthetic images already live in the diffusion model’s feature space, so DreamBooth does not have to reconcile the domain gap that plagues classical augmentations.

The result is measurably better facial resemblance in the fine-tuned DreamBooth model, with the full range of recontextualization still intact.

Practical Takeaways

These findings translate directly into actionable recommendations for anyone building portrait personalization pipelines.

1. Balance real and synthetic images

The most important constraint for preventing overfitting is dataset diversity. No single concept (a specific background, a particular outfit, a generated style) should represent more than 25% of your training set. When synthetic images crowd out real ones, the model loses its grip on genuine identity and begins to replicate InstantID’s stylistic fingerprint rather than the subject’s actual face.
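A quick way to enforce this cap is to tag each training image with its dominant concept and check the shares before training. The sketch below assumes one tag per image, which is a simplification; the tag names and the helper `overrepresented_concepts` are hypothetical.

```python
from collections import Counter

def overrepresented_concepts(labels, max_share=0.25):
    """Return {concept: share} for every concept above `max_share` of the dataset."""
    counts = Counter(labels)
    total = len(labels)
    return {c: n / total for c, n in counts.items() if n / total > max_share}

# Hypothetical concept tags, one per training image.
labels = [
    "real_kitchen", "real_park", "real_office", "real_sofa",
    "synthetic_studio", "synthetic_studio", "synthetic_studio",
    "synthetic_outdoor",
]

print(overrepresented_concepts(labels))
```

Here `synthetic_studio` covers three of eight images, so it would be flagged; removing one of those images or adding more varied ones brings the set back under the cap.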

2. The Rule of Four

When generating synthetic training data with InstantID, providing four reference images offers the best trade-off between usability and facial similarity. Fewer references produce inconsistent identity across generations; more references yield diminishing returns and increase annotation overhead.

3. Resolution matters

Images around 1 megapixel align with the native training resolution of SDXL and deliver the best qualitative results. Upscaling smaller images amplifies compression artefacts and adds interpolation blur; downscaling large images discards high-frequency facial detail. If your source photos are from a phone camera, a light centre-crop to roughly 1024 × 1024 is ideal.
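As a rough sketch of the cropping step, the helper below computes the largest centred square and the resize factor needed to reach 1024 pixels per side. It is an assumption-laden simplification: the function name `centre_crop_plan` is hypothetical, and in practice you would centre the crop on the face rather than on the frame.

```python
TARGET = 1024  # side length in pixels; 1024 x 1024 is roughly 1 megapixel

def centre_crop_plan(width, height, target=TARGET):
    """Return (crop_box, scale): the largest centred square and the resize factor to `target`."""
    side = min(width, height)
    left = (width - side) // 2
    top = (height - side) // 2
    box = (left, top, left + side, top + side)  # (left, upper, right, lower)
    scale = target / side  # > 1 means upscaling, < 1 means downscaling
    return box, scale

# Example dimensions for a 12-megapixel portrait-orientation phone photo.
box, scale = centre_crop_plan(3024, 4032)
print(box, round(scale, 3))
```

A `scale` well above 1 is a warning sign that the source photo is too small and will need upscaling.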

4. Skip the flips, rotations, and jitter

Given the evidence above: do not use Random Horizontal Flip, Random Rotation, or Colour Jitter in the fine-tuning pipeline. Their well-known benefits for large-scale classification tasks do not transfer to few-shot face personalization.

Measuring Resemblance: The FaceDistance Metric

Qualitative “vibes” are a start, but human intuition is subjective. To systematically rank checkpoints and understand how synthetic data actually moves the needle, we needed a reproducible, automated metric. This led to the development of FaceDistance, a validation tool built on FaceNet embeddings.

Rather than looking at pixels, FaceDistance looks at geometry. It projects facial images into a 128-dimensional hyperspherical space where the distance between points reflects perceptual similarity. Specifically, the metric calculates the average cosine distance between a generated image and the set of original reference images.

Definition: Given a batch of generated images and a batch of original reference images, FaceDistance is the cosine distance between their identity embeddings, averaged over every generated-reference pair.

Breaking down the logic:

- The Encoder: We use MTCNN for precise face detection, followed by FaceNet to extract the identity embedding.

- The Distance: We use cosine distance, clipped to a fixed range for numerical stability.
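One way to write the definition out, with notation chosen here purely for illustration ($G$ for the generated batch, $R$ for the reference batch, $f$ for the encoder, and $d$ for the cosine distance, shown before clipping), is as a mean over all generated-reference pairs:

```latex
\[
\mathrm{FaceDistance}(G, R)
  \;=\; \frac{1}{|G|\,|R|} \sum_{g \in G} \sum_{r \in R} d\bigl(f(g),\, f(r)\bigr),
\qquad
d(u, v) \;=\; 1 - \frac{u \cdot v}{\lVert u \rVert \, \lVert v \rVert}
\]
```

Identical embeddings give $d = 0$, orthogonal ones give $d = 1$, and the paper remains the authoritative source for the exact formulation.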

A lower FaceDistance score indicates a stronger mathematical resemblance to the subject.

Pro Tip: FaceDistance acts more like a high-pass filter than a perfect judge. It is excellent for identifying “catastrophic drift” (where the model loses the subject entirely) but it isn’t sensitive enough to decide if a “good” image is “great.”

In our pipeline, we found that simply discarding the top 15% of highest-distance embeddings from the training set (in cases with references) consistently led to cleaner, more recognizable results.
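The filtering step can be sketched in a few lines of plain Python. This is a minimal illustration, not the paper’s implementation: embeddings are assumed to be precomputed (for example by MTCNN followed by FaceNet) and passed in as plain sequences of floats, the clip range of [0, 2] is an assumption, and the helper names are hypothetical.

```python
import math

def cosine_distance(u, v):
    """1 - cosine similarity, clipped to [0, 2] (the clip range is an assumption)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return min(2.0, max(0.0, 1.0 - dot / norm))

def face_distance(candidate, references):
    """Mean cosine distance from one embedding to a set of reference embeddings."""
    return sum(cosine_distance(candidate, r) for r in references) / len(references)

def drop_worst(candidates, references, drop_fraction=0.15):
    """Keep the candidates closest to the references, discarding the worst `drop_fraction`."""
    ranked = sorted(candidates, key=lambda c: face_distance(c, references))
    n_drop = int(len(ranked) * drop_fraction)  # rounds down; a design choice
    return ranked[: len(ranked) - n_drop]

# Toy 2-D stand-ins for real face embeddings.
references = [(1.0, 0.0), (0.9, 0.1)]
candidates = [(1.0, 0.05 * i) for i in range(9)] + [(-1.0, 0.0)]

kept = drop_worst(candidates, references)
print(len(candidates), "->", len(kept))
```

The one candidate pointing away from the references is the first to go, which matches the role described above: catching catastrophic drift rather than ranking good images against great ones.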

The Human Test: Does It Fool Real People?

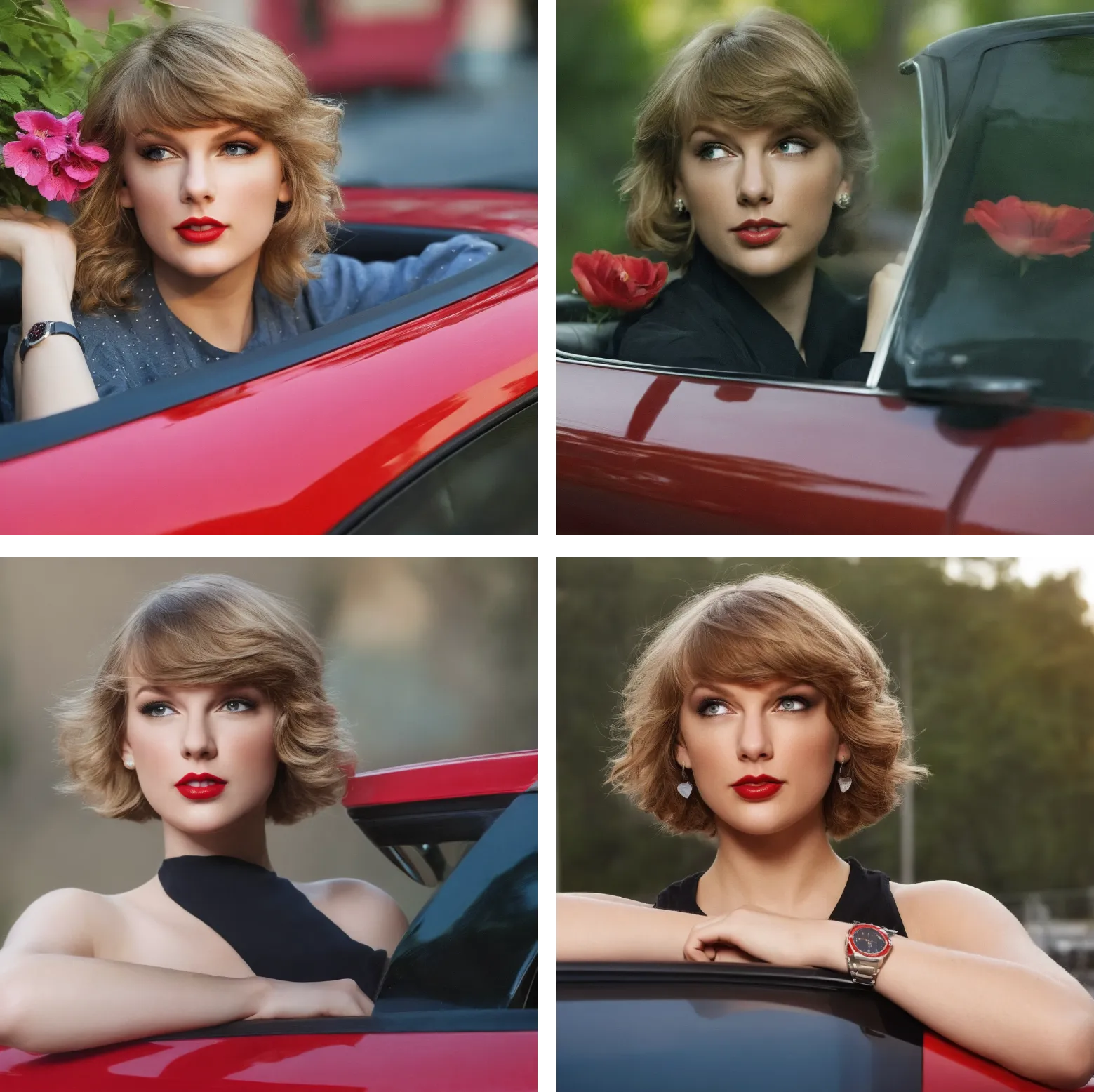

Metrics only go so far. To validate that these portraits actually pass muster in professional contexts, the study recruited 97 white-collar workers to evaluate the generated headshots. Both DreamBooth and InstantID produced portraits that were frequently indistinguishable from genuine professional photographs.

Participants’ preferences split along an interesting fault line. Those who valued identity accuracy (“does this actually look like the person?”) tended to prefer DreamBooth outputs. Those drawn to overall aesthetics favoured InstantID for its polished, retouched quality. Neither model dominated on all dimensions, which points to a useful practical heuristic: use DreamBooth-with-generative-augmentation when fidelity to a specific individual is paramount, and use InstantID directly when a studio-quality aesthetic matters more than strict identity retention.

Conclusion

Classical augmentations are not universally beneficial. For few-shot face personalization, several common techniques actively degrade output quality. Replacing them with generative augmentation, where InstantID synthesizes diverse but identity-consistent training images, closes the gap between a handful of casual snapshots and a high-fidelity professional portrait.

The broader takeaway extends beyond portraits: synthetic data is not just a fallback for when real data is scarce. It is a tool for shaping precisely what a model learns. As generative models improve, training pipelines that use one model to curate data for another will become increasingly common.

Acknowledgments

This work would not have been possible without the dedicated mentorship of Benjamin Kiefer. Beyond steering the technical direction of this research, Benjamin was a constant guide through the often-turbulent process of publishing my first paper. His attentiveness during our weekly meetings and his rigorous feedback were fundamental to the success of this project. I am deeply grateful for his support in turning these initial ideas into a peer-reviewed publication.

My thanks also go to Naman Deep Singh for valuable insights into image copyright. His guidance helped clarify the complexities of dataset curation and provided a clear understanding of the legal boundaries for image usage.

I am also grateful to the CVPR SynData4CV workshop reviewers for their constructive comments.

Citation

If you build on this work or wish to explore the full list of references and literature supporting this research, please refer to the formal paper:

Ulusan, K., & Kiefer, B. (2025). Generating synthetic data via augmentations for improved facial resemblance in DreamBooth and InstantID [Paper presentation]. CVPR Workshop on Synthetic Data for Computer Vision (SynData4CV), Nashville, TN, United States. https://arxiv.org/abs/2505.03557

@inproceedings{ulusan2025generating,
  author = {Ulusan, Koray and Kiefer, Benjamin},
  title = {Generating Synthetic Data via Augmentations for Improved Facial Resemblance in DreamBooth and InstantID},
  booktitle = {Proceedings of the CVPR 2025 Workshop on Synthetic Data for Computer Vision (SynData4CV)},
  year = {2025},
  month = {May},
  url = {https://arxiv.org/abs/2505.03557},
  note = {Accepted to the CVPR 2025 SynData4CV Workshop},
  eprint = {2505.03557},
  archiveprefix = {arXiv},
  primaryclass = {cs.CV},
  doi = {10.48550/arXiv.2505.03557}
}

- The full paper is available at arXiv:2505.03557

- The project webpage is synthetic-face-augmentation.github.io