TL;DR

I modified TD3 with three reinforcement learning techniques: Prioritized Experience Replay (PER), potential-based reward shaping, and multi-step returns. I used these to train an agent in a simulated air hockey game. Most modifications made things worse. What actually worked was a curriculum: pre-training on shooting and defending modes before facing the real opponent. The final agent wins 98.3% of games against the strong built-in opponent.

The Problem: Sparse Rewards in a Competitive Environment

Air hockey is a hard environment for RL. Goals are rare and delayed, preceded by a long sequence of positioning decisions that receive no direct reward signal. The agent needs to learn to move toward the puck, hit it in the right direction, and coordinate defense and offense, all from a reward that stays at zero until something decisive happens.

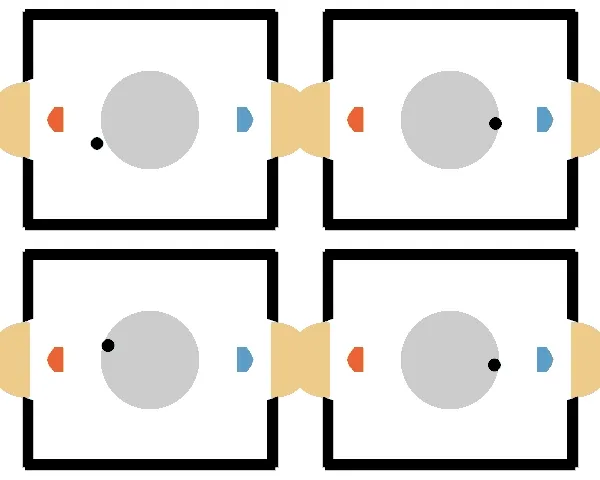

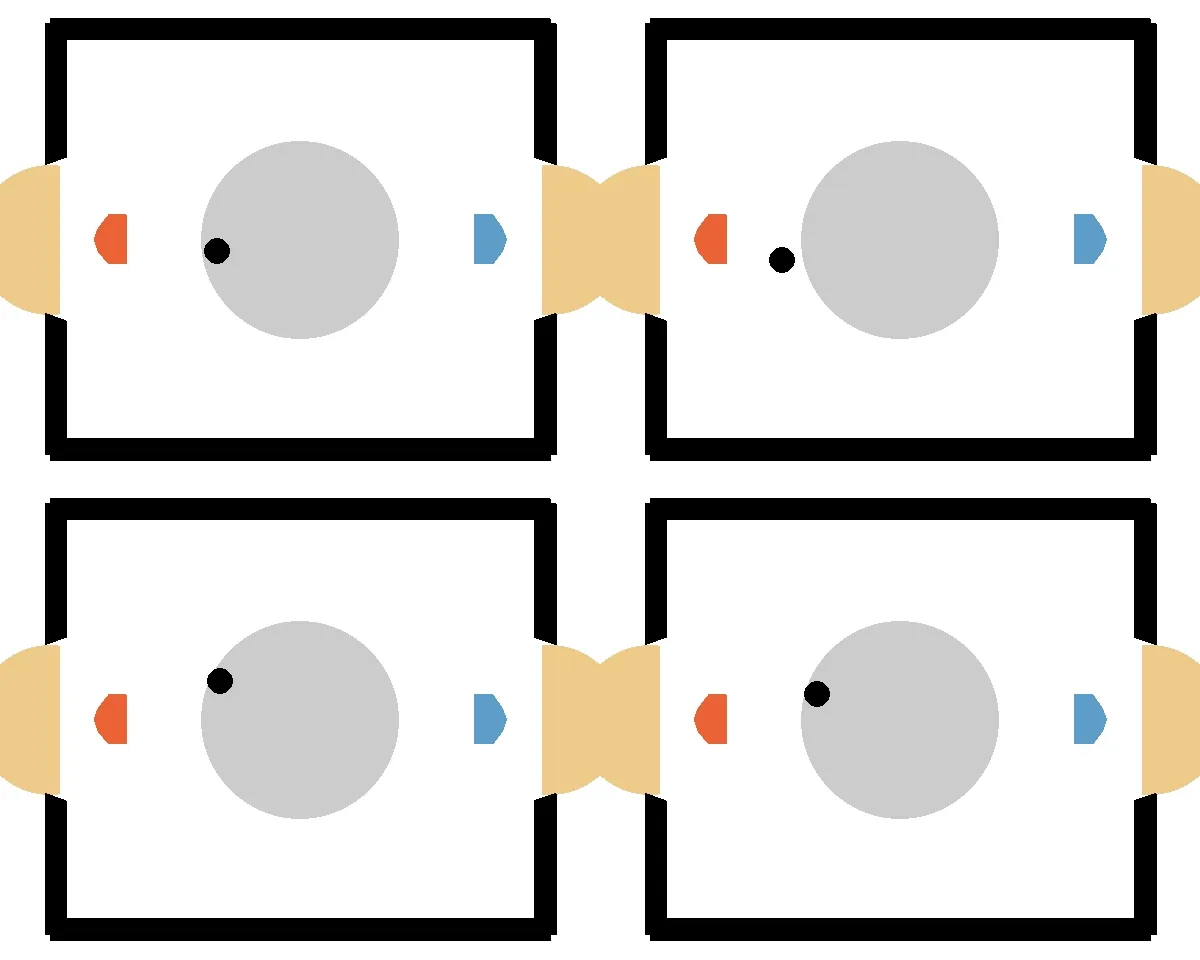

The environment I used is HockeyEnv (a.k.a. “Laser Hockey”), a Box2D/Gymnasium simulation of a two-player air hockey game. The observation space is 18-dimensional (positions, velocities, angles of both player and puck), and the action space is a 4-dimensional continuous vector covering movement and shooting. Each episode runs for up to 250 timesteps in normal mode, or a shorter 80-step window in dedicated shooting/defending training modes.

The standard “run a good off-policy algorithm and wait” approach struggles here. The agent’s first instinct is to stand still and draw, because drawing is better than the random-action baseline it gets penalized against. Getting past that local optimum requires deliberate intervention.

Base Algorithm: Twin Delayed DDPG (TD3)

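TD3 is an actor-critic algorithm that addresses the well-known overestimation bias of DDPG by maintaining two critics and taking the minimum of their Q-value estimates when computing targets:

$$y = r + \gamma \min_{i=1,2} Q_{\theta'_i}(s', \tilde{a})$$

where $\theta'_1, \theta'_2$ are the target-critic parameters and $\tilde{a}$ is the target policy's action at $s'$ with clipped noise added.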

It also delays actor updates relative to critic updates (hence “Twin Delayed”), which gives the critics time to stabilize before the policy starts chasing them. I built UlusanTD3 on top of Stable Baselines 3, extending the base TD3 class to support the three techniques described below.
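To make the two ideas concrete, here is a minimal, framework-free sketch of the clipped double-Q target and the delayed actor update. The function names and the `policy_delay=2` default are illustrative assumptions, not code taken from UlusanTD3.

```python
def td3_target(reward, done, q1_next, q2_next, gamma=0.99):
    """Clipped double-Q target: bootstrap from the smaller target-critic value."""
    min_q_next = min(q1_next, q2_next)
    return reward + (1.0 - float(done)) * gamma * min_q_next


def should_update_actor(gradient_step, policy_delay=2):
    """Delayed update: the actor steps once every `policy_delay` critic steps."""
    return gradient_step % policy_delay == 0


# The pessimistic estimate (3.0) is used, not the optimistic one (5.0).
target = td3_target(reward=1.0, done=False, q1_next=5.0, q2_next=3.0, gamma=0.9)
# Terminal transitions do not bootstrap at all.
terminal_target = td3_target(reward=1.0, done=True, q1_next=5.0, q2_next=3.0, gamma=0.9)
# Gradient steps on which the actor would be updated.
actor_steps = [step for step in range(6) if should_update_actor(step)]
```

Taking the minimum biases the target downward, which is the intended trade: a slightly pessimistic critic is less harmful to the actor than an optimistic one.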

The name UlusanTD3 is chosen purely for convenience of the project graders and easy identification in the codebase. It doesn’t imply any fundamental change to the TD3 algorithm itself, but rather serves as a container for the specific modifications and experiments conducted in this project.

Technique 1: Prioritized Experience Replay

Standard experience replay samples uniformly from a FIFO buffer. PER [Schaul et al., 2015] argues that transitions where the agent was wrong (those with a high temporal-difference (TD) error) are more informative and should be sampled more often. The sampling probability for transition $i$ is:

$$P(i) = \frac{p_i^{\alpha}}{\sum_k p_k^{\alpha}}$$

where $\alpha$ controls prioritization strength and $p_i$ is the priority of transition $i$. For TD3 with two critics, I define the priority as the average absolute TD error across both:

$$p_i = \frac{|\delta_{i,1}| + |\delta_{i,2}|}{2}$$

where $\delta_{i,j}$ is the TD error of critic $j$ on transition $i$.

To keep priorities tractable and prevent divergence, I clip TD errors to a fixed range. Without this upper bound, a single catastrophic prediction early in training can dominate the buffer forever and destabilize the actor.

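Sampling more from high-error transitions introduces a bias, which is corrected with importance-sampling (IS) weights:

$$w_i = \left(\frac{1}{N \cdot P(i)}\right)^{\beta}$$

where $N$ is the number of stored transitions and $\beta$ controls how strongly the bias is corrected. These weights are folded into the critic loss, replacing the standard MSE:

$$\mathcal{L}(\theta_j) = \frac{1}{B} \sum_{i=1}^{B} w_i \, \delta_{i,j}^2$$

where $B$ is the batch size and $\delta_{i,j}$ is the TD error of critic $j$ on transition $i$.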
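The following is a small pure-Python sketch of the PER quantities described above: sampling probabilities, IS weights, and the weighted squared-error loss. It treats the whole list as the buffer and normalizes weights by their maximum, as Schaul et al. suggest; whether UlusanTD3 normalizes the same way is an assumption, and all function names are illustrative.

```python
def sampling_probabilities(priorities, alpha):
    """P(i) = p_i^alpha / sum_k p_k^alpha."""
    scaled = [p ** alpha for p in priorities]
    total = sum(scaled)
    return [s / total for s in scaled]


def importance_weights(probabilities, beta):
    """w_i = (1 / (N * P(i)))^beta, divided by the largest weight."""
    n = len(probabilities)
    raw = [(1.0 / (n * p)) ** beta for p in probabilities]
    max_w = max(raw)
    return [w / max_w for w in raw]


def weighted_mse(td_errors, weights):
    """Mean of squared TD errors, each scaled by its IS weight."""
    return sum(w * d * d for w, d in zip(weights, td_errors)) / len(td_errors)


# Made-up (already clipped) absolute TD errors for four transitions.
priorities = [4.0, 1.0, 1.0, 2.0]
probs = sampling_probabilities(priorities, alpha=1.0)
weights = importance_weights(probs, beta=1.0)
loss = weighted_mse([4.0, 1.0, 1.0, 2.0], weights)
```

With `alpha=1` and `beta=1` the transition sampled most often also gets the smallest weight, so its larger sampling frequency is cancelled out in the expected gradient.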

Because I have two critics with potentially different scales, I give each its own optimizer rather than summing their losses:

    # In UlusanTD3.__init__ (th is torch, i.e. `import torch as th`)
    if isinstance(self.replay_buffer, PrioritizedExperienceReplayBuffer):
        # One Adam optimizer per critic, so each loss is stepped independently
        self.critic1_optimizer = th.optim.Adam(
            self.critic.q_networks[0].parameters(), lr=learning_rate
        )
        self.critic2_optimizer = th.optim.Adam(
            self.critic.q_networks[1].parameters(), lr=learning_rate
        )

The PER buffer itself is backed by a SumSegmentTree, which supports O(log N) priority updates and O(log N) stratified sampling. This is essential when the buffer holds a million transitions:

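    # module level: import numpy as np
    def _sample_indicies_proportional(self, batch_size: int) -> np.ndarray:
        # Total priority mass over the filled part of the buffer
        p_total = self._td_errors.sum(end=self.size())
        # Stratified sampling: one uniform draw inside each equal-mass segment
        segment_length = p_total / batch_size
        elem_at_segment_prefixsum = (
            np.arange(batch_size) + np.random.uniform(0, 1, batch_size)
        ) * segment_length
        # Map each prefix sum to the index of the transition that covers it;
        # wrapped in np.array so the result matches the annotated return type
        return np.array([
            self._td_errors.find_prefixsum_idx(p)
            for p in elem_at_segment_prefixsum
        ])

Using a NumPy array in SegmentTree was important because a Python list was too slow for the large buffer size and high update frequency.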
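For readers who have not seen a sum tree before, here is a minimal pure-Python sketch of the data structure and the stratified sampling on top of it. It is not the project's SumSegmentTree: the class, the method names other than `find_prefixsum_idx`, and the list-backed storage are assumptions chosen for readability rather than speed.

```python
import random


class SumTree:
    """Binary tree over leaf priorities; each inner node stores its children's sum."""

    def __init__(self, capacity):
        # Round capacity up to a power of two so the tree is complete.
        self.capacity = 1
        while self.capacity < capacity:
            self.capacity *= 2
        # Node 1 is the root; leaves live at [capacity, 2 * capacity).
        self.nodes = [0.0] * (2 * self.capacity)

    def update(self, idx, priority):
        """Set leaf `idx` and refresh the sums on the path to the root: O(log N)."""
        pos = idx + self.capacity
        self.nodes[pos] = priority
        pos //= 2
        while pos >= 1:
            self.nodes[pos] = self.nodes[2 * pos] + self.nodes[2 * pos + 1]
            pos //= 2

    def total(self):
        return self.nodes[1]

    def find_prefixsum_idx(self, prefixsum):
        """Return the leaf whose cumulative priority range contains `prefixsum`."""
        pos = 1
        while pos < self.capacity:
            left = 2 * pos
            if prefixsum < self.nodes[left]:
                pos = left
            else:
                prefixsum -= self.nodes[left]
                pos = left + 1
        return pos - self.capacity


def sample_stratified(tree, batch_size, rng=random):
    """Draw one index from each of `batch_size` equal-mass segments."""
    segment = tree.total() / batch_size
    return [
        tree.find_prefixsum_idx((k + rng.random()) * segment)
        for k in range(batch_size)
    ]


tree = SumTree(capacity=4)
for i, p in enumerate([1.0, 2.0, 3.0, 4.0]):
    tree.update(i, p)
batch = sample_stratified(tree, 4, random.Random(0))
```

Both operations walk a single root-to-leaf path, which is where the O(log N) cost comes from. A flat array of priorities would need an O(N) scan for every sampled index.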

After each gradient step, priorities are updated to reflect the latest TD errors:

    # Back in the train loop
    # Priority = mean of the two critics' absolute TD errors
    td_errors = (td_error1.abs() + td_error2.abs()) / 2.0
    self.replay_buffer.set_priorities(
        batch_inds,
        td_errors.squeeze().detach().cpu().numpy()
    )

What actually happened: PER made performance worse in every configuration I tested. More on why below.

Technique 2: Potential-Based Reward Shaping

In environments with sparse rewards, auxiliary signals that encode domain knowledge can accelerate learning without changing the optimal policy. Potential-based reward shaping [Ng et al., 1999] adds a shaping term:

$$F(s, s') = \gamma \Phi(s') - \Phi(s), \qquad r'(s, a, s') = r(s, a, s') + F(s, s')$$

The key property is that this never changes the optimal policy. It only changes how quickly the agent converges to it. The potential function $\Phi$ can encode whatever domain knowledge you have.
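A quick numeric check shows why the optimal policy is preserved: along any trajectory the shaping terms telescope, so the shaped return differs from the original one only by a quantity that depends on the start and end states. The potential values below are made up for illustration.

```python
def discounted_return(rewards, gamma):
    return sum((gamma ** t) * r for t, r in enumerate(rewards))


def shape(rewards, potentials, gamma):
    """Add F(s_t, s_{t+1}) = gamma * phi(s_{t+1}) - phi(s_t) to every reward.

    `potentials` holds phi(s_0), ..., phi(s_T): one more entry than `rewards`.
    """
    return [
        r + gamma * potentials[t + 1] - potentials[t]
        for t, r in enumerate(rewards)
    ]


gamma = 0.5
rewards = [0.0, 0.0, 0.0, 10.0]           # sparse: only the final step pays
potentials = [1.0, 3.0, 8.0, 20.0, 0.0]   # made-up phi values, phi(terminal) = 0

shaped = shape(rewards, potentials, gamma)
plain_return = discounted_return(rewards, gamma)
shaped_return = discounted_return(shaped, gamma)
# All intermediate potentials cancel; only the endpoints survive.
offset = gamma ** len(rewards) * potentials[-1] - potentials[0]
```

Here `shaped_return - plain_return` equals `offset`, which does not depend on the actions taken in between, so the ranking of policies from a given start state is unchanged even though the per-step signal is denser.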

HockeyEnv conveniently exposes sub-reward components in its info dict. I used a combination of:

- closeness_to_puck: reward for staying near the puck
- touch_puck: bonus for making contact
- puck_direction: reward for hitting the puck toward the opponent’s goal

Two components I tried and removed: centered_puck introduced noise and slowed training, and game_length inadvertently taught the agent to step aside and let in own goals.

    def shaped_reward(self, rewards, infos):
        """Add the potential-based shaping term to each environment reward."""
        # (phi(s), phi(s')) pairs read from each environment's info dict
        phis = [
            (info.get("prev_potential_reward", 0),
             info.get("current_potential_reward", 0))
            for info in infos
        ]
        # F(s, s') = gamma * phi(s') - phi(s)
        return [
            r + self.gamma * phi - phi_prev
            for r, (phi_prev, phi) in zip(rewards, phis)
        ]

Because HockeyEnv is a fully observable MDP, I compute $\Phi$ directly from the environment state on every step, so no approximation is needed.

Technique 3: Multi-Step Returns

Standard TD3 bootstraps one step into the future. In hockey, the decisive action (the puck shot) is made many timesteps before the goal is actually scored, so the one-step target has no way to credit that shot with the eventual reward.

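The truncated n-step return addresses this by accumulating rewards forward:

$$R_t^{(n)} = \sum_{k=0}^{n-1} \gamma^k r_{t+k}$$

and substituting it into the TD3 target:

$$y_t = R_t^{(n)} + \gamma^n \min_{i=1,2} Q_{\theta'_i}(s_{t+n}, \tilde{a}_{t+n})$$

where $\tilde{a}_{t+n}$ is the target policy's action at $s_{t+n}$. With reward shaping combined, the potential terms telescope, since $\sum_{k=0}^{n-1} \gamma^k \left(\gamma \Phi(s_{t+k+1}) - \Phi(s_{t+k})\right) = \gamma^n \Phi(s_{t+n}) - \Phi(s_t)$, and the target becomes:

$$y_t = \sum_{k=0}^{n-1} \gamma^k r_{t+k} + \gamma^n \Phi(s_{t+n}) - \Phi(s_t) + \gamma^n \min_{i=1,2} Q_{\theta'_i}(s_{t+n}, \tilde{a}_{t+n})$$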
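As a rough illustration of the n-step target, the sketch below accumulates up to `n` rewards, stops at the first terminal step, and only bootstraps when the window did not end the episode. The function names and the scalar `next_q` argument (standing in for the minimum of the two target critics) are assumptions made for this example.

```python
def n_step_return(rewards, dones, gamma, n):
    """Truncated n-step return over the first `n` buffered rewards.

    Stops at the first terminal step. Returns the discounted sum, the
    number of steps used, and whether the window hit a terminal state.
    """
    total = 0.0
    steps = 0
    terminated = False
    for k in range(min(n, len(rewards))):
        total += (gamma ** k) * rewards[k]
        steps = k + 1
        if dones[k]:
            terminated = True
            break
    return total, steps, terminated


def n_step_target(rewards, dones, next_q, gamma, n):
    """n-step return plus gamma^steps times the bootstrap value.

    `next_q` is ignored when the window ended in a terminal state.
    """
    ret, steps, terminated = n_step_return(rewards, dones, gamma, n)
    if terminated:
        return ret
    return ret + (gamma ** steps) * next_q


# A reward two steps ahead reaches the target directly instead of via bootstrapping.
delayed = n_step_target([0.0, 0.0, 1.0], [False, False, False], next_q=8.0, gamma=0.5, n=3)
# The episode ends on the second step, so nothing after it is counted.
ended = n_step_target([0.0, 10.0, 5.0], [False, True, False], next_q=8.0, gamma=0.5, n=3)
```

With `n=1` this reduces to the ordinary one-step TD3 target.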

Implementation-wise, this requires buffering the last $n$ transitions before committing any of them to the replay buffer. The buffer is flushed early when a terminal state is reached:

    def add(self, obs, next_obs, action, reward, done, infos):
        self._reward_info_buffer.append((reward, infos))
        if any(done):
            # flush remaining transitions on episode end
            while len(self._reward_info_buffer) > 0:
                self._add_n_step_return(obs, next_obs, action, done, infos)
            return
        if len(self._reward_info_buffer) < self.n_step_return_num:
            return  # keep buffering
        self._add_n_step_return(obs, next_obs, action, done, infos)

The discount exponent in the Bellman target also needs updating to account for the extended horizon:

target_q_values = (

replay_data.rewards

+ (1 - replay_data.dones)

* self.gamma ** self.n_step_return_num # γⁿ instead of γ in TD3

* next_q_values

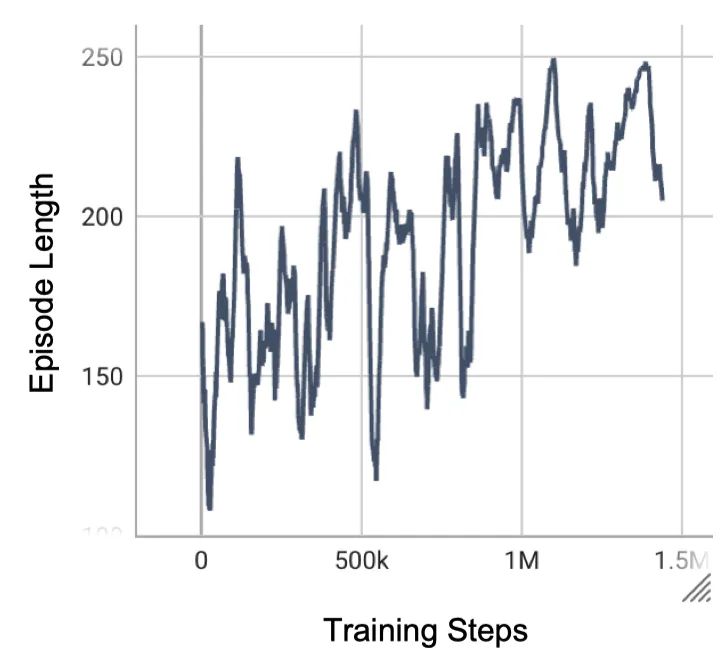

).detach()The Ablation Study: Most Things Didn’t Help

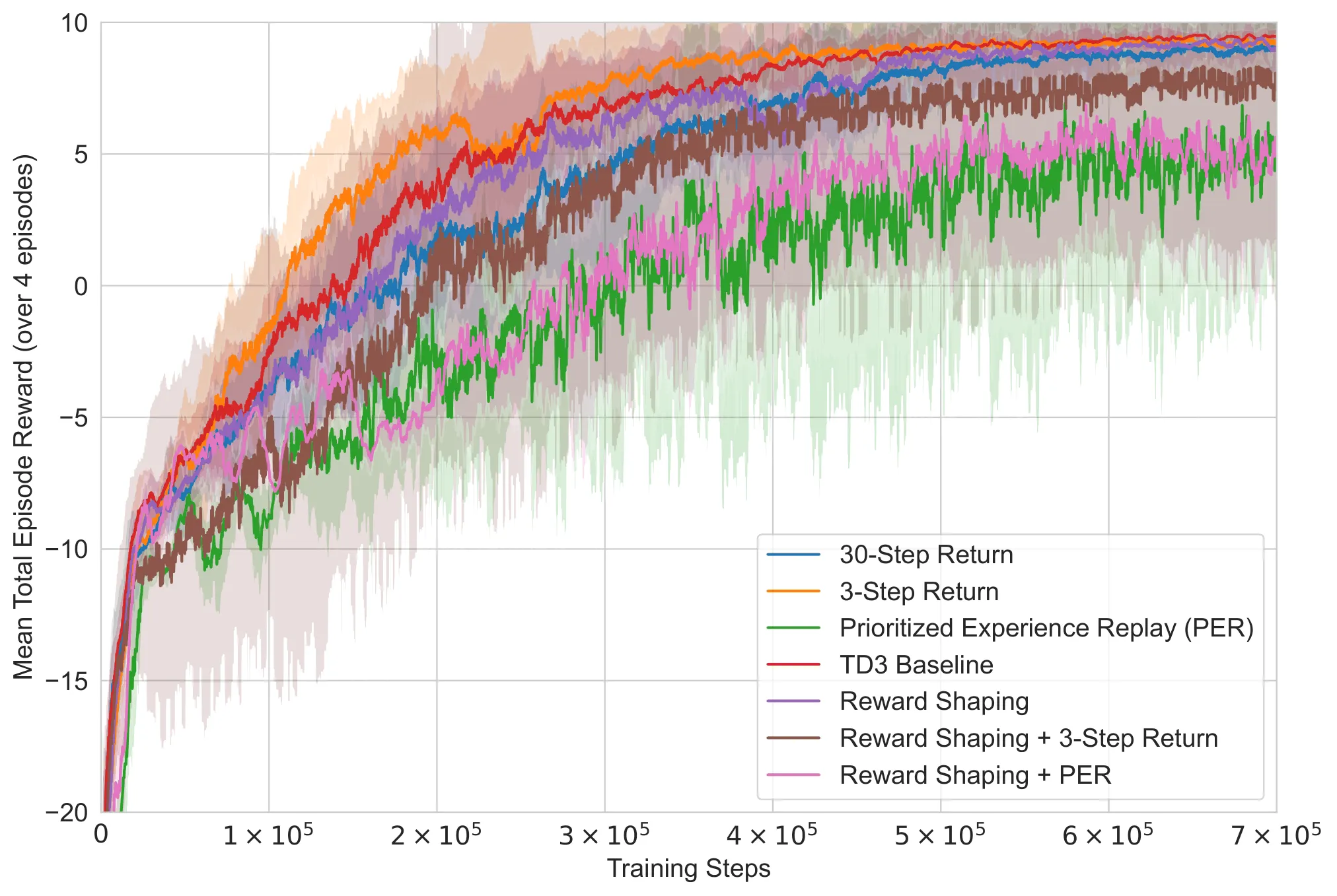

I ran combinations of all three techniques (26 configurations in total, sweeping $n$ for multi-step returns) and evaluated each against the weak built-in opponent. The results were not great.

Most modifications performed worse than the TD3 baseline. The 3-step return variant was the only technique that consistently outperformed the baseline, and even that improvement was modest.

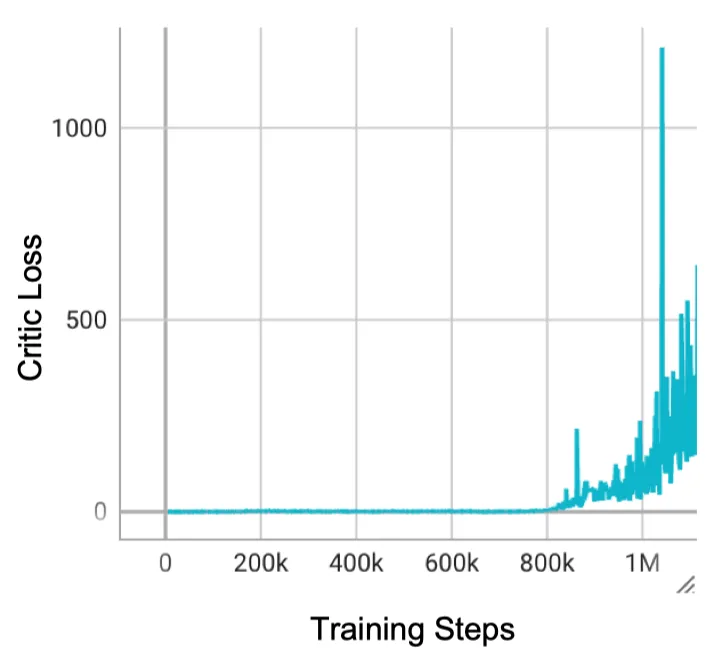

PER failed systematically. Training with IS weights disabled diverged immediately: without correction, the buffer fills with high-error transitions and the critic chases a badly biased distribution. With IS weights enabled, training was stable but still underperformed the baseline.

The failure of PER wasn’t a bug, it was informative. PER’s design assumes a stationary data distribution. When the environment or the opponent changes, that assumption breaks.

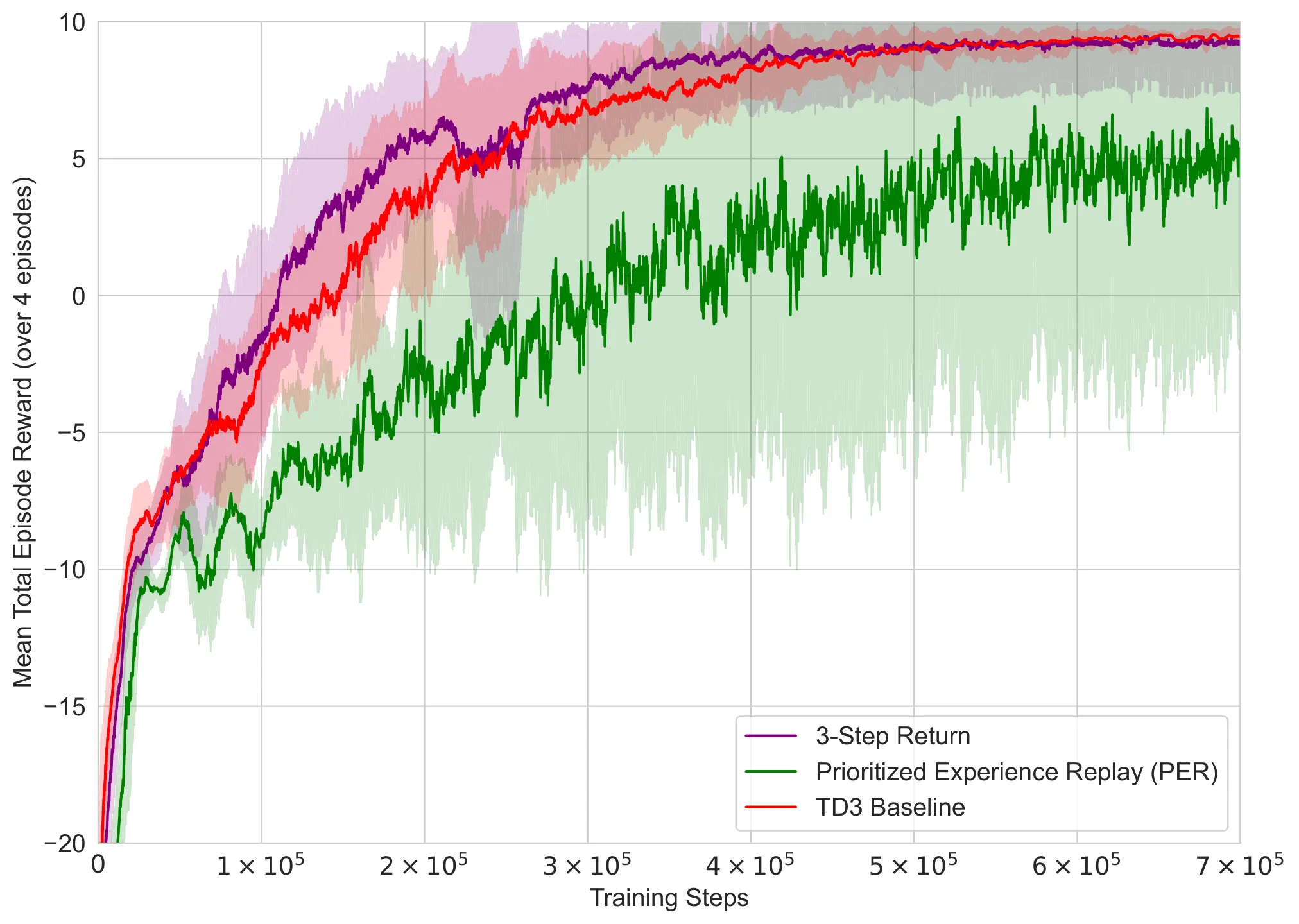

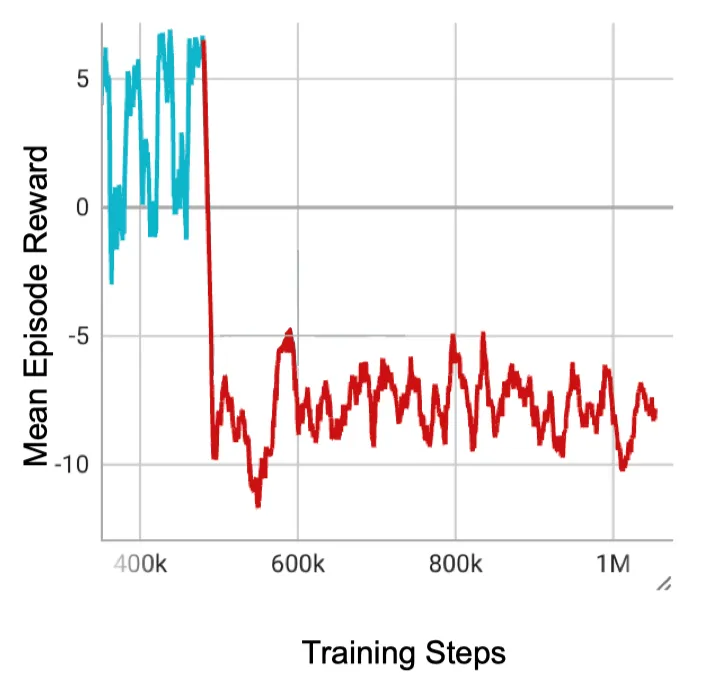

Curriculum Learning: The Thing That Actually Worked

The real insight was about changing how the agent was trained rather than what algorithm it used.

Without guidance, an agent facing a strong opponent quickly figures out that drawing (never scoring, never conceding) is safer than attempting to score. Once it settles into that strategy, it’s hard to unlearn, because the risk of a failed shot (giving the opponent a chance to score) outweighs any expected benefit from trying.

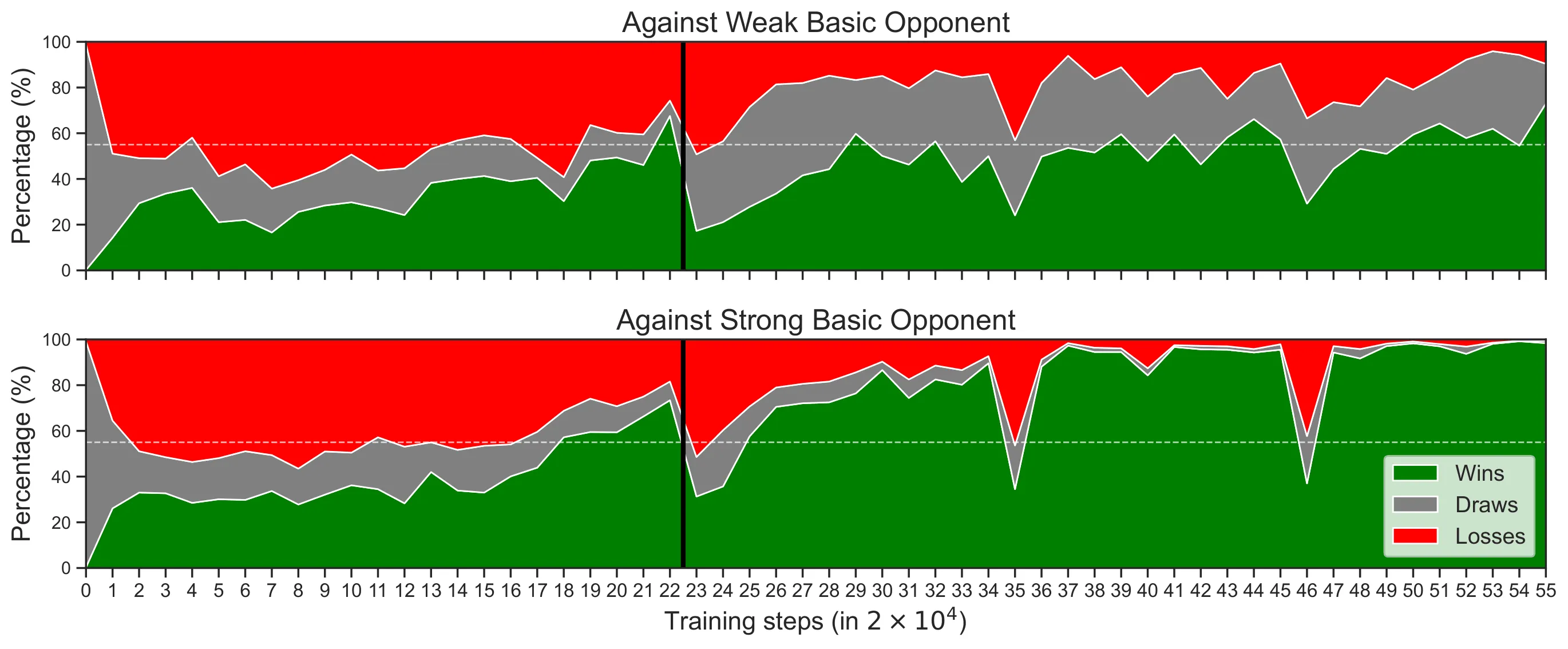

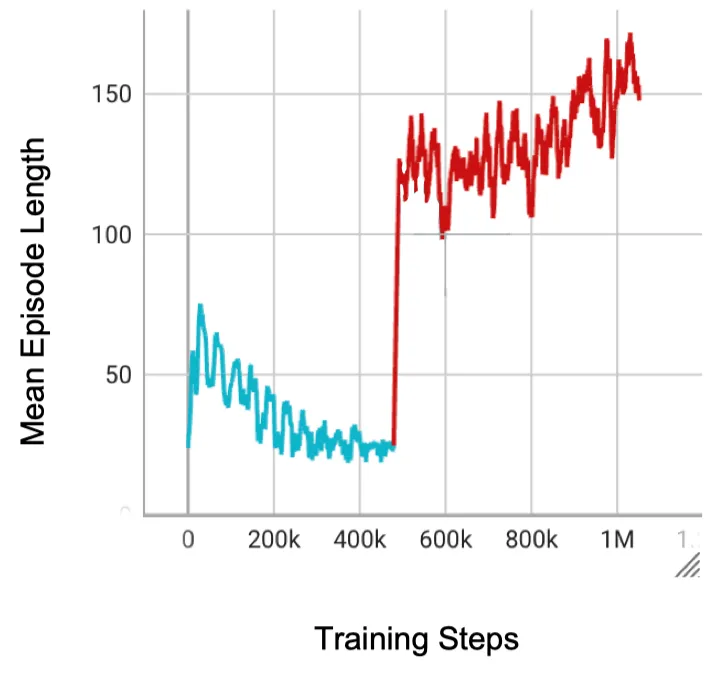

The curriculum I designed breaks this trap in two phases.

Phase 1 (steps 0 to 440k): Alternate every episode between the dedicated shooting mode and defending mode of HockeyEnv. These stripped-down scenarios cut out the full-game complexity and force the agent to develop fundamental skills: aim and shoot; track and block. The episode horizon is only 80 steps, which enables much faster iteration.

Phase 2 (steps 440k+): Empty the replay buffer entirely and switch to training against the strong BasicOpponent in full normal-mode games. The clean buffer prevents old experiences from contaminating the new distribution.
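The two-phase schedule can be sketched as a pair of small helpers. The 440k switch point comes from the text; the mode labels and function names are hypothetical and are not HockeyEnv or UlusanTD3 identifiers.

```python
PHASE_1_STEPS = 440_000  # switch point reported in the text


def select_mode(total_steps, episode_index):
    """Pick the training mode for the next episode."""
    if total_steps < PHASE_1_STEPS:
        # Phase 1: alternate shooting and defending every episode.
        return "shooting" if episode_index % 2 == 0 else "defending"
    # Phase 2: full games against the strong scripted opponent.
    return "normal"


def should_reset_buffer(prev_total_steps, total_steps):
    """True only for the update that crosses the phase boundary."""
    return prev_total_steps < PHASE_1_STEPS <= total_steps


step_counts = [0, 80, 160, 440_000, 440_250]
schedule = [select_mode(steps, ep) for ep, steps in enumerate(step_counts)]
```

Checking the boundary with a previous and a current step count means the buffer is cleared exactly once, even if the step counter jumps past 440k inside an episode.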

The agent that had been stuck below 50% win rate against the strong opponent reached 98.3% win rate within 110k steps of Phase 2 training. Notably, the win rate against the weak opponent also climbed during Phase 1, even though the agent had never played full games during that phase.

Why PER and Curriculum Don’t Mix

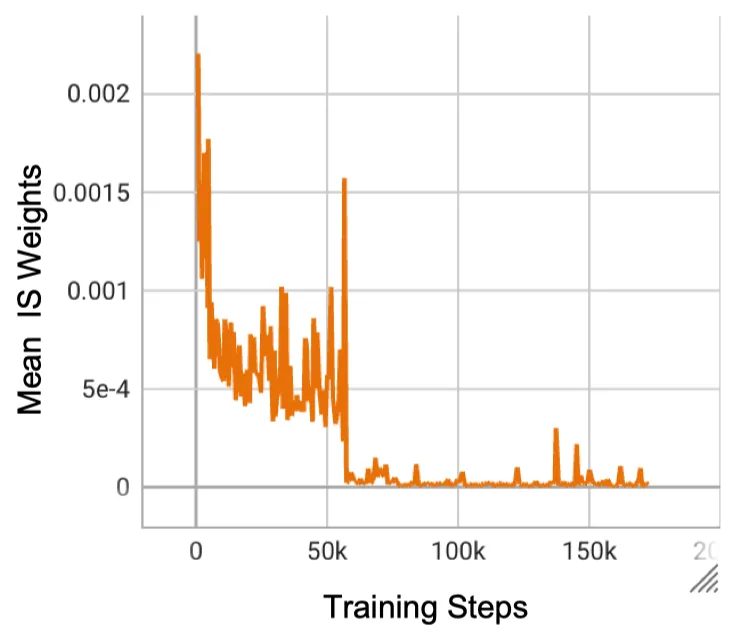

When the curriculum switches from Phase 1 to Phase 2, the replay buffer gets emptied. For standard TD3, this is a clean reset. For PER, it causes problems.

The new transitions in Phase 2 initially have high TD errors, because the agent has never seen full-game states before. These saturate the buffer with maximum-priority entries, and the IS weights assigned to them drop to near zero once a large fraction of transitions share the same maximum priority.

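As the expression below shows, when new high-error transitions dominate the distribution, the probability $P(i)$ of sampling one of them becomes very large relative to $1/N$, where $N$ is the small number of transitions stored right after the reset, and the weight vanishes:

$$P(i) \gg \frac{1}{N} \;\Rightarrow\; w_i = \left(\frac{1}{N \cdot P(i)}\right)^{\beta} \to 0$$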

The critic is updated on high-error samples with effectively zero weight, which means it barely updates at all. Consequently, the actor loss diverges because it is receiving gradients from an unmoving, inaccurate critic.
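To get a feel for the magnitudes involved, the sketch below evaluates the unnormalized IS weight for a uniformly sampled transition and for one whose sampling probability is far above $1/N$. The buffer size, probabilities, and $\beta$ values are made-up numbers, and the sketch ignores any max-normalization of the weights, which the text does not specify.

```python
def raw_is_weight(buffer_size, probability, beta):
    """Unnormalized importance-sampling weight w = (1 / (N * P))^beta."""
    return (1.0 / (buffer_size * probability)) ** beta


N = 10_000
# Uniform sampling, P(i) = 1/N: the weight is 1 and the update is unscaled.
uniform_weight = raw_is_weight(N, 1.0 / N, beta=1.0)
# A transition sampled 500 times more often than uniform, P(i) = 500/N.
dominant_weight = raw_is_weight(N, 0.05, beta=1.0)
# A weaker correction (beta < 1) shrinks the weight less, but still shrinks it.
partial_weight = raw_is_weight(N, 0.05, beta=0.4)
```

The weight falls off as a power of $N \cdot P(i)$, so the more a transition dominates sampling, the less each of its updates moves the critic.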

This is why PER was dropped from the final curriculum configuration entirely.

Self-Play

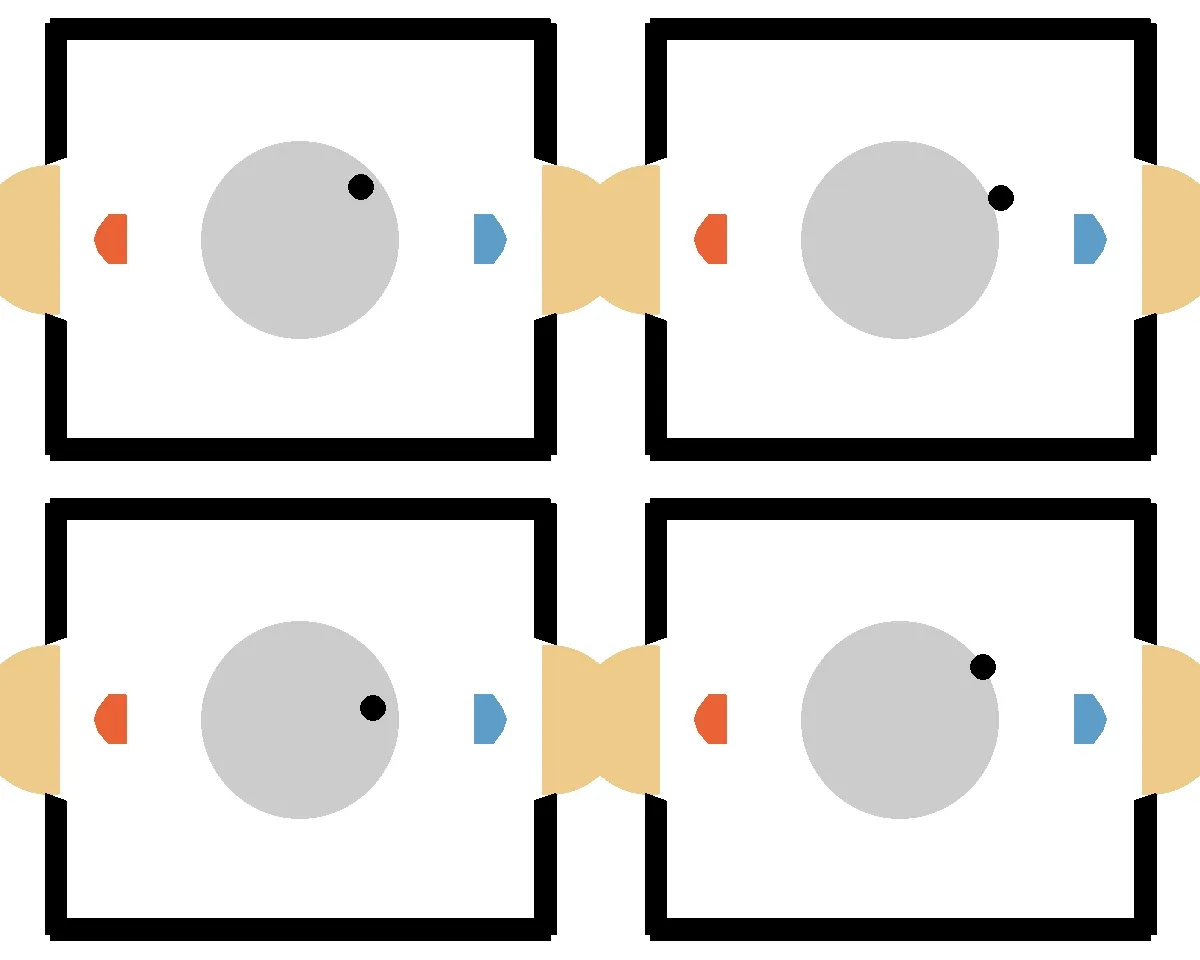

I also explored self-play, training the agent against a pool of its own past checkpoints. The hope was to develop generalization beyond the scripted BasicOpponent.

It didn’t work. The agents converged to a Nash equilibrium of mutual avoidance: both players positioning themselves to not touch the puck rather than risk conceding a goal. Episode lengths climbed toward the 250-step maximum. Once discovered, this drawing strategy was self-reinforcing, because any agent that tried to attack would get punished by an opponent that had learned to exploit aggressive positioning.

Risk aversion dominates in self-play when the stakes are symmetric. The agent’s value function correctly estimates that the expected return from “don’t touch the puck” is higher than the noisy expected return from “attempt a shot.” Injecting BasicOpponent episodes or clearing the buffer when switching opponents did not fix this.

The approach that actually works, from what I heard from peers, is to mix self-play with skill-based training against an easy opponent throughout the whole training run. That way the agent never completely forgets that scoring goals is the point.
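One way such a mix could be scheduled is sketched below: each episode is either a skill drill against the easy scripted opponent or a full game against a past checkpoint. This is my own illustration of the idea, not my peers' code; the mixing probability, mode labels, and opponent names are all hypothetical.

```python
import random

SKILL_MODES = ("shooting", "defending")  # hypothetical labels for the drill modes


def pick_episode_setup(checkpoint_pool, rng, skill_prob=0.3):
    """Return (mode, opponent) for the next episode.

    With probability `skill_prob`, or whenever no checkpoint exists yet,
    play a skill episode against the easy scripted opponent. Otherwise
    play a full game against a uniformly sampled past checkpoint.
    """
    if not checkpoint_pool or rng.random() < skill_prob:
        return rng.choice(SKILL_MODES), "scripted_weak"
    return "normal", rng.choice(checkpoint_pool)


rng = random.Random(0)
setups = [pick_episode_setup(["ckpt_100k", "ckpt_200k"], rng) for _ in range(1000)]
skill_fraction = sum(mode != "normal" for mode, _ in setups) / len(setups)
```

Keeping `skill_prob` above zero for the whole run means scoring-focused episodes never disappear from the data, which is the property the drawing equilibrium seems to depend on.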

Self-play can lead to degenerate equilibria if not carefully structured. In competitive environments, it’s crucial to maintain a curriculum that keeps the agent focused on the ultimate goal rather than settling for safe but unproductive strategies.

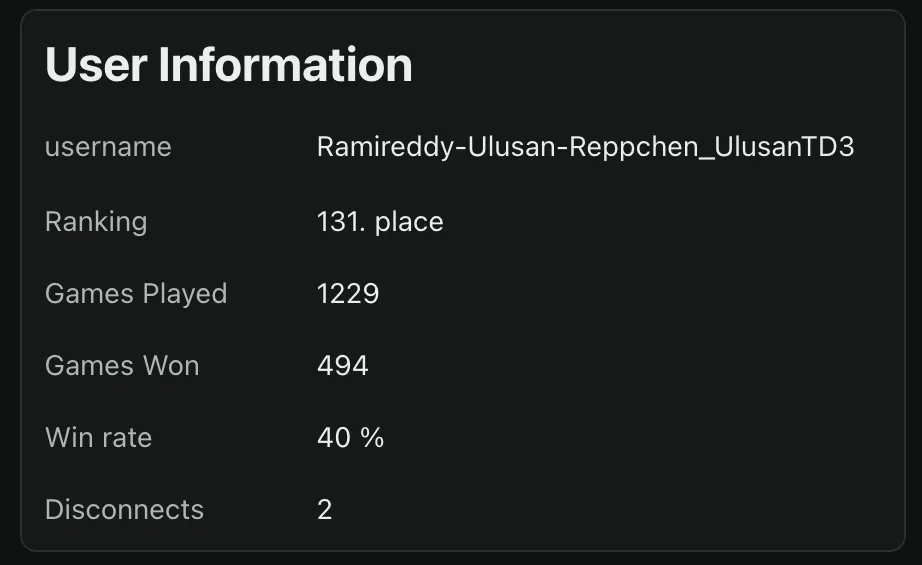

Tournament Results

The final agent (3-step return, curriculum, no PER) competed in the 2025 RL course tournament at the University of Tübingen, ranking 131/146 (including stale accounts) with a 40% win rate against other students’ agents. Some students chose not to join the tournament.

This is a more honest number than the 98.3% against BasicOpponent. My self-play loop didn’t work because the rewards were very sparse. My peers included other modes in their curriculum to increase the reward signal and prevent the drawing deadlock. In the tournament, RL agents with world models didn’t perform much better than ones like TD3. The real winning move was to train on tournament data to learn the specific quirks of the opponents, which is a form of overfitting but effective in a competitive setting. Yes, it was allowed.

If I had included the basic defending and shooting modes throughout the self-play phase, tournament performance would likely have been noticeably better. The agent was robust but never had a complete training setup.

Practical Takeaways

These findings aren’t specific to air hockey.

On PER: It works well in stationary, single-distribution settings. In non-stationary environments (curriculum training, population-based training, anything that changes the data distribution mid-training), the mismatch between stored priorities and the current distribution becomes a liability. Either clear the buffer on every regime change or skip PER in this setting.

On reward shaping: Be selective about what you encode in $\Phi$. Subcomponents that make intuitive sense (stay near the puck) can introduce perverse incentives at the MDP level (game_length rewarding own goals). The sufficiency theorem guarantees no harm asymptotically, but finite training is far from asymptotic.

On multi-step returns: A modest $n$ is almost always better than a large one in continuous control. A large $n$ introduces high variance in the return estimate and makes the bootstrap target less reliable.

On self-play: Combine it with skill-based training modes from day one. Self-play alone, starting from scratch, finds the drawing equilibrium before it finds the scoring one.

Conclusion

Adding algorithmic improvements to TD3 mostly made things worse. What actually unlocked real performance was a carefully structured training curriculum: a decision about what the agent practices rather than how it learns.

In sparse-reward environments, the hardest problem is not the algorithm, it’s the training setup. PER, multi-step returns, and reward shaping are all principled ideas, but they operate on data. Curriculum learning shapes what data is generated in the first place.

A stable self-play loop that combines pool-based opponent selection with dedicated skill modes is the most promising direction for pushing these agents further.


Acknowledgments

This project was completed as part of the RL Course 2024/25 taught by Prof. Georg Martius at the University of Tübingen, in collaboration with Elia Frederick Reppchen (Rainbow DQN) and ChandraLekha Ramireddy (SAC). Compute was provided by the TCML cluster offered by the Cognitive Systems Group (of Prof. Andreas Zell) at the University of Tübingen.